Discord Forums: The Echo Chamber Trap for Player Sentiment


A PM on a live-service game team opens the #feature-requests forum channel on Monday morning. Forty new posts, several with hundreds of replies, a few with triple-digit emoji reactions. She picks the top five by engagement, writes them up, takes them to the product meeting. By Friday, three of those requests are in the backlog.

Four months later, only one made it into a release. Not because the team failed, but because the other three weren't what the community actually wanted. They were what the forum's most active posters wanted, amplified by repetition and by the forum's own design biases, and filtered through a reading that ignored every channel that wasn't a forum.

This is the Discord forums echo chamber, and every studio that reads feedback mostly through its forum channels is in it.

Why forums feel like the truth

Forums look structured. Titled posts. Tags. Reply threads. First messages that read like proposals. Compared to the scrolling chaos of #general-chat, a forum channel looks like your community doing your job for you — they've organised their feedback, categorised it, even voted on it with reactions.

It feels like reading the community. It's actually reading the 3–5% of the community that writes forum posts.

Forums are a real tool and worth having (we've written about when they beat threads). The trap is treating them as the source of truth for sentiment, which they never are.

The repetition problem

The single biggest distortion: forum content repeats. When a player wants to request a feature, they usually don't search for an existing post about it. They open a new one. It's faster, the search is clunky, and maybe they vaguely remember seeing the same thread three months ago but can't find it. Or they saw it got closed with no response. Or they just think their framing is different.

So the same request shows up four, six, ten times — each as a separate post. If you're ranking by post count or recent activity, the theme gets counted multiple times, artificially amplifying its apparent importance. Meanwhile, a well-argued post that happened to land at a quiet moment looks unimportant: one post, a handful of replies, and then it's buried by the newer duplicates.

The real signal about how much the community cares about an auction house isn't ten separate posts titled "please add an auction house." It's those ten posts collapsed into one theme plus every casual mention of auction houses across every other channel.

The over-weighting problem

The second distortion: forum posts look like signal. A 500-word titled post with 80 replies and 30 upvotes is cognitively heavy. It reads like serious feedback. Three hundred messages in #general about the same topic — short, unpolished, mid-play venting — look like noise.

But the #general discussion is often more representative. It's broader. It includes the players who would never bother writing a forum post, which is most of your community. That silent-majority group is exactly the one whose retention you're trying to protect.

If your feedback pipeline is "forum posts → backlog," you're systematically privileging the players who post in forums and discounting everyone else.

The counter-narrative problem

Every forum post has a narrative: usually the author's. If the OP says "the new economy system is broken, here's why," the reply thread engages with that framing. Some agreeing, some disagreeing — but posters self-select. People who disagree with the OP's premise often don't reply at all. They go back to the game, complain in #general, or shrug.

So the counter-narrative is either underrepresented in the reply thread, or it's sitting in chat channels where you weren't looking. Either way, if you judge the temperature of a feature by its forum thread alone, you're reading one side of a conversation and calling it the whole.

The manual process that didn't work

A real version of this: one Accord customer — a mid-sized studio with a very active Discord — was running their feedback pipeline manually like this:

A community team member opened each new forum post in #feature-requests and #bug-reports.

They counted the replies, counted the emoji reactions, wrote the post's title, author, approximate sentiment, and associated theme into a row of a master spreadsheet.

The spreadsheet got forwarded weekly to the product team.

The process broke in three predictable ways:

The spreadsheet went stale. New replies came in, emoji counts changed, new duplicate threads appeared. Keeping it current meant re-opening every post every day. Nobody had time; the file drifted further from the real state of the forum every week.

The spreadsheet missed general discussion. It only captured structured forum data. Anything said in #general-chat or other channels about the same theme simply wasn't in the dataset the product team looked at. Half the relevant conversation was invisible by design.

There was no counter-narrative column. "In favour" was the whole row. If 40% of the community thought the proposed change was fine, that 40% wasn't represented anywhere in the document product decisions were being made from.

Those decisions got made anyway. The roadmap reflected the spreadsheet, and the spreadsheet reflected the forum, and the forum reflected the most motivated posters. Three degrees away from the actual community.

What actually tracks forum sentiment

A usable forum-sentiment pipeline has to solve four specific problems:

1. De-duplicate themes across repeated posts. Ten "please add an auction house" threads are one theme, not ten. The pipeline needs to recognise semantically similar posts — by title, body, and tag combination — and treat them as a single trackable topic with one timeline, one reply corpus, and one evolving view over time.

2. Include general discussion. Whatever classification runs on forum posts has to run on chat channels under the same topic definitions. If "auction house" is a Topic, every mention of it in #general, #suggestions, #off-topic, thread replies, and forum replies flows into the same bucket. Structured and unstructured feedback are the same feedback; treating them as separate datasets means you only ever see half.

3. Rank by more than post count. Raw message volume privileges loud minorities. Ranking by ratio (what share of each player's messages are about the theme, what share of each cohort's conversation it represents) tells you whether a theme is proportionally important. Weighting in emoji reactions, unique-author counts, and sustained activity over time separates spiky one-day outrage from real ongoing shifts.

4. Surface the counter-narrative by default. Every theme should show the supportive sentiment and the disagreement, qualifications, and "it's fine actually" responses from elsewhere in the server. Otherwise every product brief gets written from one side of the argument.
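To make the first of those problems concrete, here is a minimal sketch of collapsing duplicate threads into themes. It uses plain word-overlap (Jaccard similarity) on post titles as a stand-in for real semantic matching, which would normally rely on embeddings or a classifier. The stopword list, the 0.5 threshold, and the sample titles are all assumptions made up for illustration.

```python
import re

# Hypothetical stopword list: filler words that say nothing about the theme.
STOPWORDS = {"a", "an", "the", "please", "add", "we", "need", "to", "for"}

def tokens(title):
    """Lowercase a title and keep only its non-stopword words."""
    words = re.findall(r"[a-z0-9]+", title.lower())
    return frozenset(w for w in words if w not in STOPWORDS)

def jaccard(a, b):
    """Share of words two token sets have in common (0.0 to 1.0)."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)

def group_into_themes(titles, threshold=0.5):
    """Greedy grouping: a title joins the first theme whose seed title
    is similar enough, otherwise it starts a new theme."""
    themes = []  # list of (seed_tokens, member_titles)
    for title in titles:
        current = tokens(title)
        for seed, members in themes:
            if jaccard(seed, current) >= threshold:
                members.append(title)
                break
        else:
            themes.append((current, [title]))
    return [members for _, members in themes]

# Hypothetical forum post titles.
posts = [
    "Please add an auction house",
    "Auction house when?",
    "We need an auction house",
    "Add controller support",
    "Controller support please",
]

themes = group_into_themes(posts)
for members in themes:
    print(len(members), "posts:", members[0])
```

Five posts collapse into two themes here. Word overlap will miss paraphrases ("player marketplace" versus "auction house"), which is why a production pipeline needs something semantic rather than lexical.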

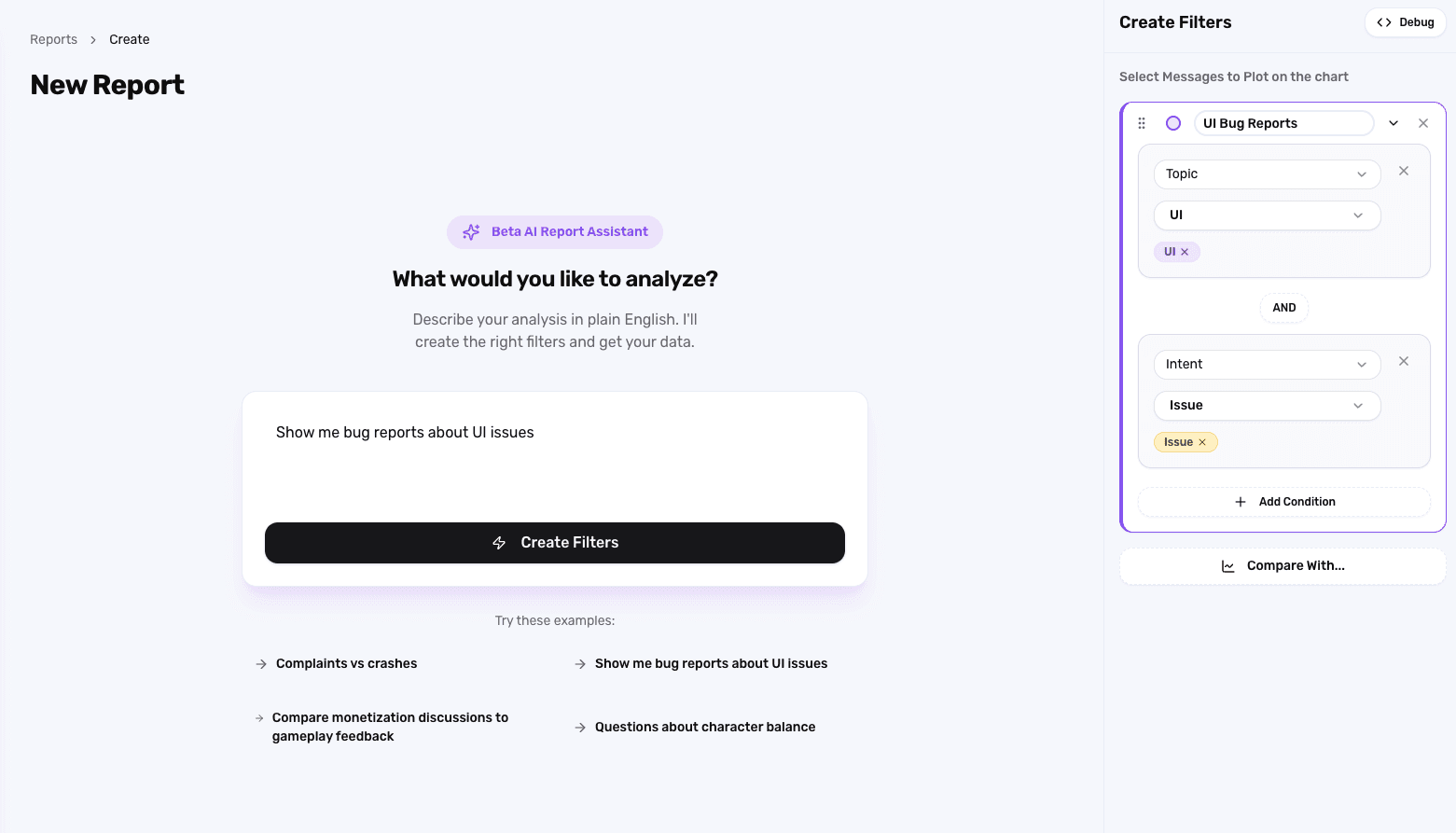

How Accord does it

Accord's classification runs across every message in your server — forum posts, forum replies, thread messages, chat channels, general discussion — under one unified Topic taxonomy. The auction-house theme is one bucket whether it came from a structured forum post or a throwaway comment in #general. Specifically:

Semantic matching groups duplicate forum threads into single Topics automatically. Ten separate "please add X" posts become one Topic with ten contributing threads, a complete timeline, and the full corpus of replies across all of them.

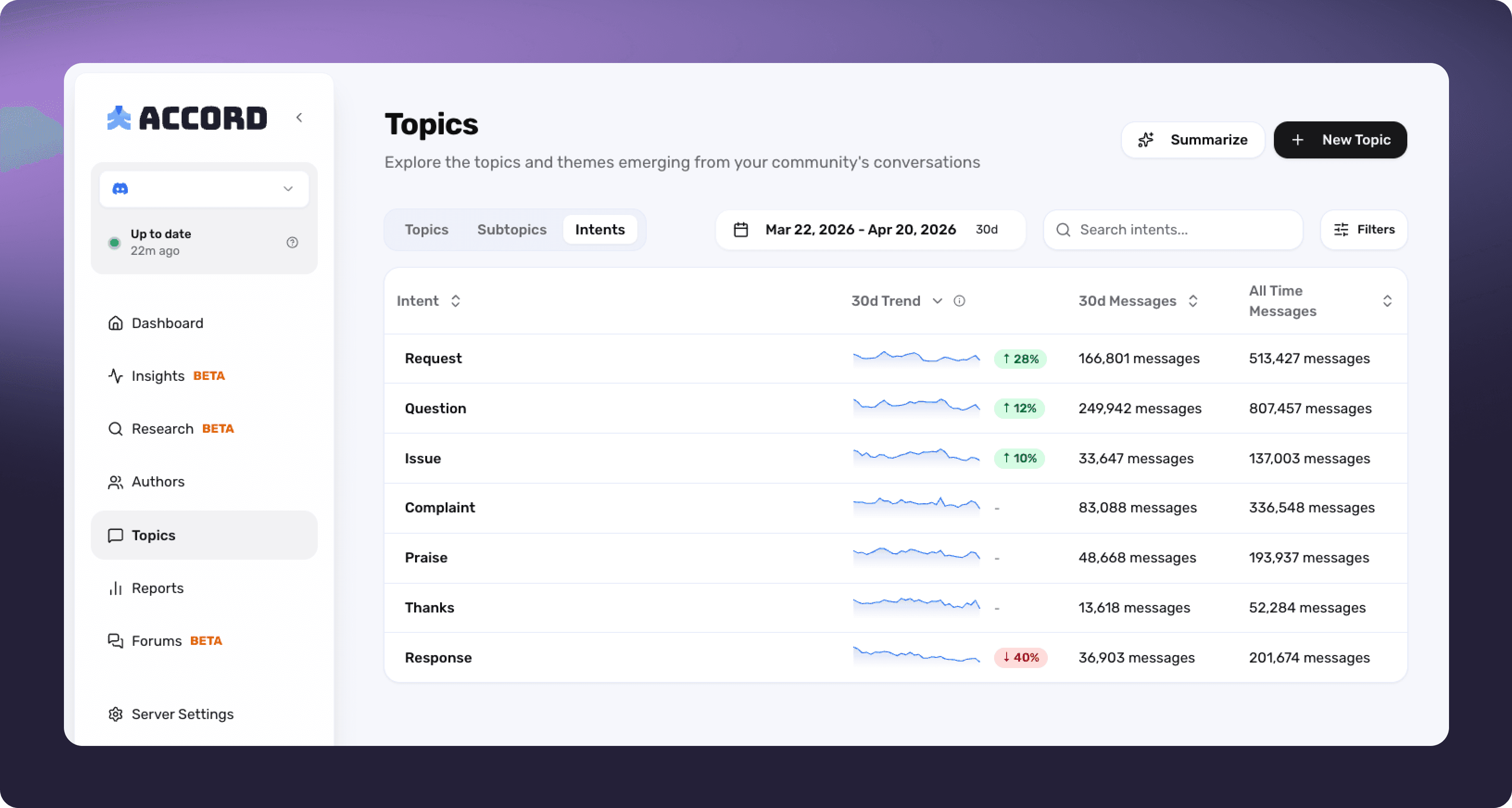

Cross-channel intent classification labels every message — not just forum posts — by Intent (Complaint, Request, Issue, Praise, Question, Thanks, Response). A theme's full signal includes the chat-channel commentary, not just the structured posts.

Multi-factor ranking combines message volume, unique-author counts, emoji reactions, and ratio-of-messages per author. You can rank the Most Negative by what share of a user's messages are Complaints, not by raw complaint count — the filter that stops you from mistaking a handful of loud users for a trend.
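The difference between ranking by count and ranking by share is easy to see in a few lines. The sketch below is not Accord's implementation; it is an illustration with invented authors and message counts, assuming each message already carries an Intent label.

```python
from collections import Counter

# Hypothetical sample data: one (author, intent) pair per message.
messages = (
    [("ana", "Complaint")] * 30 + [("ana", "Praise")] * 270
    + [("ben", "Complaint")] * 8 + [("ben", "Question")] * 2
    + [("cy", "Complaint")] * 5 + [("cy", "Request")] * 5
)

def complaint_stats(messages):
    """Return {author: (complaint_count, complaint_share)}."""
    totals = Counter(author for author, _ in messages)
    complaints = Counter(
        author for author, intent in messages if intent == "Complaint"
    )
    return {a: (complaints[a], complaints[a] / totals[a]) for a in totals}

stats = complaint_stats(messages)

# Raw count puts the most prolific poster on top.
by_count = sorted(stats, key=lambda a: stats[a][0], reverse=True)
# Share puts the author whose messages are mostly complaints on top.
by_share = sorted(stats, key=lambda a: stats[a][1], reverse=True)

print("by count:", by_count)
print("by share:", by_share)
```

In this made-up data, "ana" tops the raw-count ranking with 30 complaints, but those are only 10% of her messages. Ranking by share moves "ben" (80% complaints) to the top and "ana" to the bottom.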

Counter-narrative surfacing is built into Breakdowns. Split any Topic's chart by Intent, and the Praise, Response, and Question messages show up next to the Complaints. If the community is actually divided, you see both sides in one view rather than discovering the dissent six weeks into the build.
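As a rough illustration of what a per-Topic split by Intent computes (a sketch with invented data, not Accord's code), the idea is to count every label under a Topic rather than only the complaints. The topic name and message counts below are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical labelled messages: one (topic, intent) pair per message.
labelled = (
    [("economy rework", "Complaint")] * 6
    + [("economy rework", "Praise")] * 4
)

def breakdown(labelled):
    """Count messages per intent within each topic."""
    counts = defaultdict(Counter)
    for topic, intent in labelled:
        counts[topic][intent] += 1
    return counts

def shares(intent_counts):
    """Convert per-intent counts into fractions of the topic total."""
    total = sum(intent_counts.values())
    return {intent: n / total for intent, n in intent_counts.items()}

economy = shares(breakdown(labelled)["economy rework"])
print(economy)
```

A complaints-only view of this sample would report six unhappy messages. The split shows that four in ten messages on the same Topic were positive, which is the part a single-column spreadsheet leaves out.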

Drill-down without losing the whole. Open the auction-house Topic and you see every forum thread contributing to it, every chat message classified under it, every reply, every emoji. Zoom back out and see how the theme trends over 30 days compared to every other feature discussion on the server.

What used to be a stale spreadsheet built from one channel becomes a live, cross-channel view with the counter-argument included by default.

The read-back for PMs and community leads

The practical test is simple. For any theme you're about to pull into the backlog, can you answer — in under a minute — "who's in favour, who's against, and how does this look across the whole server?"

If the answer means opening each forum post one by one, reading #general separately, cross-referencing in a spreadsheet, and trusting that the top-voted thread represents the community's actual view, you're losing a day a week to organisation overhead and still missing half the picture.

If the answer is one chart with a Breakdown, a counter-narrative already surfaced, and a summary you can copy into a product doc in thirty seconds, that's the time you get back. It's time the community team gets to spend on building the right thing instead of transcribing the wrong one.

Forums are useful. They're not the whole community. The studios that ship the right changes are the ones reading both.

See what Accord surfaces in your Discord community — book a demo.